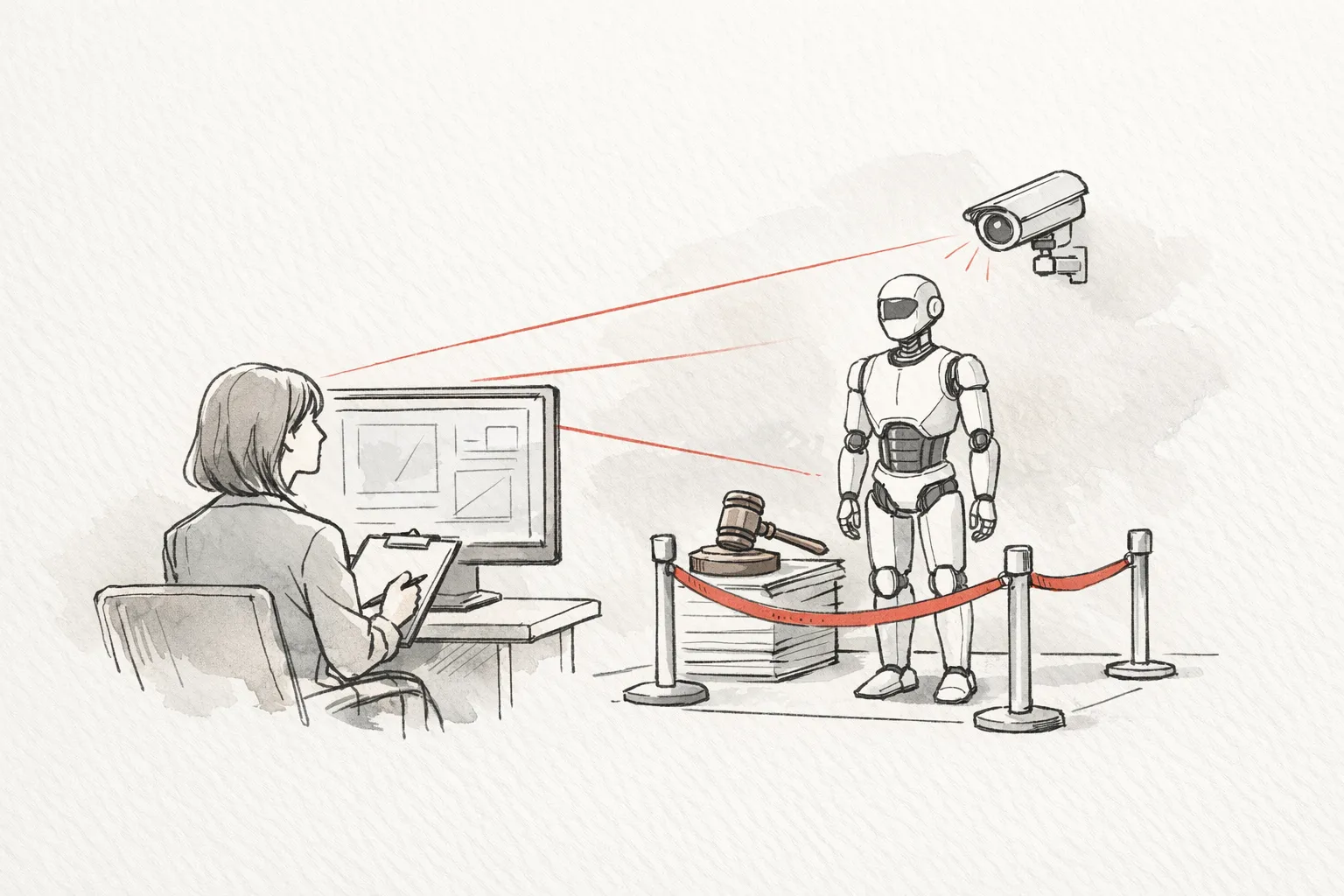

AI-Powered Compliance Monitoring for Hedge Funds: Real-Time Governance follows a mid-sized hedge fund with several strategy teams whose risk signals were fragmented across separate systems: an order management system (OMS), an execution management system (EMS), a CRM, and a data lake. The customer wanted real-time governance: a single auditable view of risk that could trigger timely investigations while preserving the speed and flexibility needed for research and trading decisions. By deploying AI-powered monitoring that detects deviations as they happen, encodes policy controls, and centralizes transcripts and notes with structured tagging and search, the program moved from manual, reactive processes to an integrated workflow with governance built in. The transformation matters because it reduces latency between signal and response, improves signal quality, and creates regulator-ready documentation that is easy to reproduce for audits. With end-to-end integration across risk engines, data sources, and collaboration tools, the fund gains ongoing oversight across desks while maintaining continuous decision support for traders and researchers.

Snapshot:

- Customer: Hedge fund services, mid-sized firm with multiple strategy teams

- Goal: Real-time governance with auditable evidence for regulators and boards

- Constraints: Siloed data across OMS, EMS, CRM, and data lake; heterogeneous tech stack; need for rapid escalation while preserving speed

- Approach: AI-powered real-time risk monitoring with policy-aware reasoning, centralization of transcripts, live chaperoning, and end-to-end integration

- Proof: Observations from risk and compliance staff, before-and-after comparisons, audit trail completeness, regulator readiness assessments, dashboards, written evidence, and escalation records

Context and Challenge: Fragmented Data and the Quest for Real-Time Compliance Governance

This case examines a mid-sized hedge fund with multiple strategy teams operating in a mixed technology environment that includes an order management system, execution management system, customer relationship management, and a centralized data lake. Risk signals are dispersed across these systems, each with its own data model and identifiers, making cross-system correlation difficult. Decision workflows relied on manual reviews and static dashboards, which created latency between signal detection and action. The firm aimed to shift to real-time governance with auditable evidence, enabling regulators and boards to review decisions without slowing research and trading tempo. The transformation needed to preserve the speed of insight while introducing structured, traceable controls and centralized documentation.

The environment is characterized by data silos, legacy tooling, and a heterogeneous tech stack that complicates integration and scalability. IOI- and MNPI-related risks require rapid, standardized escalation paths, yet governance practices lacked a shared taxonomy and automated enforcement. Regulatory requirements span multiple jurisdictions, demanding adaptable policies and rigorous change management. Across desks, visibility into risk decisions remained fragmented, and live monitoring of high-risk calls was not coordinated. The stakes included potential regulatory findings, reputational harm, and elevated operational risk if the organization could not demonstrate a clear, auditable governance process.

The challenge this firm faced was not merely implementing new technology but rethinking governance as an end-to-end capability. Real-time risk signals, policy compliance, and evidence collection had to co-exist in a unified workflow that could be audited, defended in examinations, and learned from to improve over time.

The challenge

The core problem is how to unify dispersed risk signals into a single, real-time governance framework that encodes policy controls, supports immediate escalation, and provides auditable evidence across trading, research, and operations. The solution must preserve decision speed, enable cross-desk collaboration, and remain adaptable to regulatory changes while delivering regulator-ready documentation.

What made this harder than it looks:

- Data fragmentation across OMS, EMS, CRM, and data lake, hindering reliable cross-system matching

- Inconsistent identifiers and data quality issues that reduce signal fidelity

- Balancing real-time monitoring with the need for thorough validation and governance

- No standardized taxonomy for IOI and MNPI risks, complicating tagging and routing

- A lack of centralized, auditable trails across multiple tools and data sources

- High alert noise without clear prioritization, leading to alert fatigue among teams

- Regulatory change management across jurisdictions, requiring rapid policy updates and testing

- Limited cross-desk visibility, which impedes coordinated risk decisions

Strategic approach for Real Time Compliance Governance

The team began with a governance-first mindset, choosing to anchor the program in policies and human oversight before chasing full automation. This meant defining risk limits, escalation protocols, and an auditable decision trail that could be trusted by regulators and boards while still enabling rapid research and trading. The initial focus was on creating a repeatable, defensible workflow that could adapt to changing regulations and desk practices without sacrificing the speed required by live markets. By embedding policy-aware reasoning into AI agents and grounding outputs in source documents, the organization laid a foundation for trustworthy automation rather than a purely technical upgrade.

In the early phase, they explicitly did not attempt a sweeping end-to-end replacement of all risk and compliance processes. Instead, they selected high-value, low-risk use cases and conducted a controlled pilot on a single desk to prove feasibility and value. This cautious approach reduced disruption, allowed real-world validation of governance controls, and created a blueprint for scaling with clear consent from business leaders and compliance functions. The decision to pilot first also enabled rapid iteration on data schemas, tagging taxonomy, and escalation paths before wider rollout.

The strategy combined a unified data foundation with AI-driven real-time monitoring, policy-aware reasoning, retrieval-augmented generation, and centralized transcripts with robust tagging and search. The approach balanced automation with a human in the loop at crucial decision points, preserving explainability and accountability while enabling faster signal-to-action cycles. It also prioritized regulator-ready documentation and immutable audit trails to improve audit readiness from day one, setting the stage for broader adoption across desks and jurisdictions.

Decision tradeoffs

| Decision | Option chosen | What it solved | Tradeoff |
|---|---|---|---|
| Data architecture | Build a unified data foundation with standardized identifiers | Reliable cross-system matching across OMS, EMS, CRM, and data lake | Increased upfront effort and coordination across platforms |
| Governance model | Policy-aware AI with human in the loop for high-risk actions | Safer decisions and clear accountability | Potentially slower response on high-velocity events requiring human input |
| Scope of automation | Start with high-value, low-risk use cases and pilot on one desk | Validated value with minimal disruption and faster learning | Limited initial scope may delay full-scope benefits |
| Information grounding | Centralized transcripts with tagging and search | Faster investigations and reproducible evidence | Requires governance around tagging accuracy and data retention |
| Audit readiness | Immutable logs and regulator-ready documentation | Provable compliance and smoother audits | Storage and governance overhead for long-term retention |
| Live monitoring scope | Real-time monitoring with auto-escalation | Immediate risk containment and timely responses | Resource intensity for ongoing live supervision |

Implementation: Actionable steps to deliver Real Time Compliance Governance

To establish real-time governance, the team anchored the rollout in governance-first principles and then built integrated workflows that preserve human judgment. They began by mapping the data landscape across the order management system, execution management system, customer relationship management system, and the data lake to create a single reference for risk signals. This foundation enabled policy-aware reasoning to operate in real time while maintaining escalation controls and an auditable trail. Centralized transcripts with tagging and search capabilities were introduced to ensure evidence can be reproduced and reviewed. The plan favored a measured rollout with a pilot desk to validate assumptions before scaling across desks and jurisdictions.

- Map data landscape and define unified identifiers

The team conducted an inventory of data sources across OMS, EMS, CRM, and the data lake and established a common reference model so signals can be correlated in real time. This step clarified where data overlaps exist and how identifiers align across systems. The effort reduced data fragmentation and set the stage for reliable cross-system matching.

Checkpoint: Cross system identifiers are standardized and a reference data model is established.

Common failure: Data owners disagree on the reference model causing continued mismatches.
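
The reference-model idea in this step can be sketched roughly as follows. This is a minimal illustration, not the fund's actual design: the `SourceRecord` and `ReferenceModel` names, the normalization rule, and the sample identifiers are all assumptions made for the example.

```python
from dataclasses import dataclass
from typing import Optional


@dataclass
class SourceRecord:
    system: str    # e.g. "OMS", "EMS", "CRM" (labels are illustrative)
    local_id: str  # identifier as it appears in that system
    payload: dict


class ReferenceModel:
    """Maps (system, local_id) pairs to one canonical entity ID."""

    def __init__(self) -> None:
        self._xref: dict = {}

    @staticmethod
    def _normalize(local_id: str) -> str:
        # Assumed normalization: trim whitespace and ignore case.
        return local_id.strip().upper()

    def register(self, canonical_id: str, system: str, local_id: str) -> None:
        self._xref[(system, self._normalize(local_id))] = canonical_id

    def resolve(self, record: SourceRecord) -> Optional[str]:
        return self._xref.get((record.system, self._normalize(record.local_id)))


def correlate(records, model):
    """Group records by canonical ID; return unresolved records separately."""
    grouped: dict = {}
    unresolved: list = []
    for record in records:
        canonical_id = model.resolve(record)
        if canonical_id is None:
            unresolved.append(record)  # surfaced for data owners to fix
        else:
            grouped.setdefault(canonical_id, []).append(record)
    return grouped, unresolved
```

Returning unresolved records explicitly, rather than dropping them, gives data owners a concrete list to reconcile when they disagree about the reference model.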

- Deploy monitoring with policy-aware reasoning

Real-time risk monitoring was configured to process signals as they arrive and apply policy rules with escalation gates. The approach ensures actions stay within risk thresholds while enabling rapid response when anomalies appear. This alignment between signals and controls is what makes ongoing governance feasible.

Checkpoint: Real-time alerts begin to reflect policy boundaries and escalation paths.

Common failure: Rules become too rigid or too loose leading to missed alerts or over escalations.
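
One way to picture policy rules with escalation gates is a small rule evaluator like the sketch below. The rule IDs, the `position_pct` field, and the 3% and 5% thresholds are hypothetical values chosen for illustration; the source does not describe the fund's actual rules.

```python
from dataclasses import dataclass
from enum import Enum
from typing import Callable


class Action(Enum):
    ALLOW = "allow"
    ALERT = "alert"
    ESCALATE = "escalate"


@dataclass
class PolicyRule:
    rule_id: str
    description: str
    predicate: Callable[[dict], bool]  # True when the signal breaches the rule
    action: Action


# Ordering used to pick the most severe action among the rules that fired.
_SEVERITY = {Action.ALLOW: 0, Action.ALERT: 1, Action.ESCALATE: 2}


def evaluate(signal: dict, rules: list) -> tuple:
    """Return the most severe action and the IDs of every rule that fired."""
    fired = [rule for rule in rules if rule.predicate(signal)]
    if not fired:
        return Action.ALLOW, []
    worst = max(fired, key=lambda rule: _SEVERITY[rule.action])
    return worst.action, [rule.rule_id for rule in fired]


# Hypothetical rules and thresholds, for illustration only.
RULES = [
    PolicyRule("POS-WARN", "Position approaching desk limit",
               lambda s: s.get("position_pct", 0.0) > 3.0, Action.ALERT),
    PolicyRule("POS-LIMIT", "Position exceeds desk limit",
               lambda s: s.get("position_pct", 0.0) > 5.0, Action.ESCALATE),
]
```

Keeping the fired rule IDs alongside the action makes it easier to see, during tuning, which rules are too rigid or too loose.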

- Establish guardrails with escalation and human in the loop

Escalation thresholds were codified and human review points were embedded for high-risk actions. This combination preserves accountability while allowing speed in low-risk situations. The guardrails create a defensible workflow that regulators can audit.

Checkpoint: Escalation paths are triggered consistently and documented reviews occur at required decision points.

Common failure: Thresholds drift over time without periodic review resulting in inconsistent handling.
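
A human-in-the-loop gate of this kind might look like the following sketch. The `risk_score` field, the 0.7 threshold, and the class names are assumptions for illustration; in practice the threshold would be set and periodically reviewed by compliance.

```python
from dataclasses import dataclass, field
from datetime import datetime, timezone
from typing import Optional


@dataclass
class Review:
    reviewer: str
    approved: bool
    note: str
    at: datetime = field(default_factory=lambda: datetime.now(timezone.utc))


@dataclass
class PendingAction:
    action_id: str
    risk_score: float  # hypothetical 0..1 score from the monitoring layer
    review: Optional[Review] = None


HIGH_RISK_THRESHOLD = 0.7  # illustrative value, not taken from the case study


def requires_review(action: PendingAction) -> bool:
    return action.risk_score >= HIGH_RISK_THRESHOLD


def may_proceed(action: PendingAction) -> bool:
    """Low-risk actions proceed; high-risk ones need a recorded approval."""
    if not requires_review(action):
        return True
    return action.review is not None and action.review.approved
```

Because the review is stored on the action itself, the documented decision point travels with the record that later lands in the audit trail.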

- Centralize transcripts, tagging, and search across tools

A centralized repository for transcripts, notes, and related materials was created with a standardized tagging taxonomy. This enables rapid filtering and grounded review across multiple tools. It also improves the ability to reproduce investigations and support regulatory inquiries.

Checkpoint: Transcripts are searchable by tag and accessible to authorized reviewers across desks.

Common failure: Tagging quality declines causing missed connections between related documents.
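
As a rough sketch of a tagged, searchable repository, the in-memory index below rejects tags outside the taxonomy and supports combined tag and phrase search. The class names and the sample taxonomy are assumptions; a production system would use a real search backend and access controls.

```python
from collections import defaultdict
from dataclasses import dataclass, field


@dataclass
class Transcript:
    doc_id: str
    desk: str
    text: str
    tags: set = field(default_factory=set)


class TranscriptIndex:
    """In-memory stand-in for a central repository with a fixed taxonomy."""

    def __init__(self, allowed_tags: set) -> None:
        self._allowed = set(allowed_tags)
        self._docs: dict = {}
        self._by_tag = defaultdict(set)

    def add(self, transcript: Transcript) -> None:
        unknown = transcript.tags - self._allowed
        if unknown:
            # Rejecting unknown tags keeps the taxonomy from drifting.
            raise ValueError(f"tags not in taxonomy: {sorted(unknown)}")
        self._docs[transcript.doc_id] = transcript
        for tag in transcript.tags:
            self._by_tag[tag].add(transcript.doc_id)

    def search(self, tags: set, phrase: str = "") -> list:
        """Documents carrying all given tags whose text contains the phrase."""
        if tags:
            ids = set.intersection(*(self._by_tag.get(t, set()) for t in tags))
        else:
            ids = set(self._docs)
        needle = phrase.lower()
        hits = (self._docs[i] for i in ids if needle in self._docs[i].text.lower())
        return sorted(hits, key=lambda t: t.doc_id)
```

Validating tags at write time is one way to address the tagging-quality failure mode, since mistagged documents are caught before they enter the repository.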

- Enable live chaperoning for high-risk calls with escalation workflows

Live monitoring was enabled for high-risk calls with an escalation workflow that routes concerns to the appropriate compliance or risk function in real time. This capability helps contain issues before they escalate and provides immediate evidence of actions taken.

Checkpoint: High-risk calls trigger documented real-time interventions and follow-ups.

Common failure: Real-time oversight is incomplete, leading to gaps in coverage during critical moments.
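
The routing part of live chaperoning can be illustrated with a streaming sketch like the one below. Plain substring matching is only a stand-in for whatever detection the monitoring layer actually uses, and the watch terms, owners, and class names are hypothetical.

```python
from dataclasses import dataclass
from typing import Iterable, Iterator


@dataclass
class Utterance:
    call_id: str
    offset_s: float  # seconds from the start of the call
    speaker: str
    text: str


@dataclass
class Intervention:
    call_id: str
    offset_s: float
    matched_term: str
    routed_to: str


# Hypothetical watch terms mapped to the function that should be notified.
WATCH_TERMS = {
    "not public": "compliance",
    "unannounced": "compliance",
}


def chaperone(stream: Iterable[Utterance]) -> Iterator[Intervention]:
    """Yield an intervention record as soon as a watch term is heard."""
    for utterance in stream:
        lowered = utterance.text.lower()
        for term, owner in WATCH_TERMS.items():
            if term in lowered:
                yield Intervention(utterance.call_id, utterance.offset_s, term, owner)
                break  # at most one intervention per utterance
```

Writing the function as a generator means each intervention is emitted while the call is still in progress, which is the property the checkpoint above asks for.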

- Integrate OMS, EMS, data lake, and risk engines for end-to-end workflow

Integration connected source systems with risk engines to support an end-to-end governance workflow from signal detection through action documentation. The integration ensures consistent data lineage and enables cohesive reporting for management and regulators.

Checkpoint: Data flows between systems are validated and end-to-end traceability is established.

Common failure: Integration gaps create orphaned data or incomplete audit trails.
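
A traceability check for this step could be as simple as comparing identifiers across the three stages of the workflow. The sketch below assumes hypothetical record shapes (`signal_id`, `decision_id`) that are not taken from the source.

```python
def find_lineage_gaps(signals, decisions, audit_entries):
    """Report breaks in the signal -> decision -> audit entry chain.

    Assumed record shapes (hypothetical): signals carry 'signal_id';
    decisions carry 'decision_id' and 'signal_id'; audit entries carry
    'decision_id'.
    """
    signal_ids = {s["signal_id"] for s in signals}
    decided_signal_ids = {d["signal_id"] for d in decisions}
    audited_decision_ids = {a["decision_id"] for a in audit_entries}
    return {
        # Signals that never reached a decision.
        "signals_without_decision": sorted(signal_ids - decided_signal_ids),
        # Orphaned decisions that point at a signal no system knows about.
        "decisions_without_signal": sorted(
            d["decision_id"] for d in decisions if d["signal_id"] not in signal_ids
        ),
        # Decisions missing from the audit trail.
        "decisions_without_audit": sorted(
            d["decision_id"] for d in decisions
            if d["decision_id"] not in audited_decision_ids
        ),
    }
```

Run on a schedule, a check like this turns the "orphaned data or incomplete audit trails" failure mode into a report that can be reviewed before an audit rather than during one.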

- Build immutable audit trails and regulator-ready documentation

Tamper-resistant logs and standardized documentation templates were created to capture reasoning, actions, and outcomes. This supports regulator examinations and internal governance reviews with clear traceability to source materials.

Checkpoint: Audit trails are complete and consistent with regulatory expectations.

Common failure: Logs are incomplete or stored in silos making retrieval difficult during audits.
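
The source does not say how the tamper-resistant logs were built. One common technique, shown here purely as an assumed illustration, is a hash chain: each entry stores the SHA-256 hash of the previous entry, so any later modification is detectable. Note that this makes the log tamper-evident rather than strictly immutable; the field names are hypothetical.

```python
import hashlib
import json
from datetime import datetime, timezone

GENESIS = "0" * 64  # placeholder "previous hash" for the first entry


def _digest(prev_hash: str, body: dict) -> str:
    # Canonical JSON so the same body always hashes to the same value.
    canonical = json.dumps(body, sort_keys=True, separators=(",", ":"))
    return hashlib.sha256((prev_hash + canonical).encode("utf-8")).hexdigest()


class AuditLog:
    """Append-only log in which each entry commits to the one before it."""

    def __init__(self) -> None:
        self.entries: list = []

    def append(self, actor: str, action: str, rationale: str, sources) -> dict:
        body = {
            "ts": datetime.now(timezone.utc).isoformat(),
            "actor": actor,
            "action": action,
            "rationale": rationale,
            "sources": list(sources),  # references back to source documents
        }
        prev = self.entries[-1]["hash"] if self.entries else GENESIS
        entry = {"body": body, "prev": prev, "hash": _digest(prev, body)}
        self.entries.append(entry)
        return entry

    def verify(self) -> bool:
        """Recompute the chain; False if any entry was altered or reordered."""
        prev = GENESIS
        for entry in self.entries:
            if entry["prev"] != prev or entry["hash"] != _digest(prev, entry["body"]):
                return False
            prev = entry["hash"]
        return True
```

In this sketch, `verify()` would be run before assembling an evidence package, so that reviewers can confirm the trail has not changed since the entries were written.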

- Run a controlled pilot on a single desk and plan scale

The implementation proceeded with a controlled pilot on a single desk to validate processes and governance controls before wider deployment. The pilot provided practical feedback on workflows and helped refine data schemas and escalation criteria. The learning from the pilot informs the broader rollout plan while preserving risk management discipline.

Checkpoint: Pilot results confirm readiness for scale and highlight remaining gaps.

Common failure: Pilot findings are not integrated into the scaling plan leading to repeated adjustments later.

Results and Proof: Real Time Governance Deliveries in Practice

The implementation of real-time compliance governance brought together disparate risk signals into a single, continuously monitored workflow. Risk and compliance teams gained a clearer picture of what is happening across trading, research, and operations, enabling faster decision making without sacrificing the rigor of review. The program also established auditable evidence that can be reproduced for regulator inquiries and internal governance reviews, while maintaining the speed required for live markets. Across desks, stakeholders observed improved signal quality, more consistent escalation, and stronger alignment with evolving regulatory expectations. The outcomes were evaluated through qualitative observations, controlled comparisons of process times, and regulator-facing documentation that could be audited.

In addition to process improvements, the initiative produced tangible evidence of enhanced governance maturity. Centralized transcripts and structured tagging simplified investigations and cross-tool analysis, while live monitoring during high-risk moments provided proactive containment. Overall, the approach demonstrated that governance driven by policy-aware AI, when paired with human oversight, can sustain speed and reliability at scale and support ongoing regulatory readiness.

| Area | Before | After | How it was evidenced |
|---|---|---|---|
| Risk signal visibility | Signals fragmented across OMS, EMS, CRM, and data lake with limited cross-desk view | Unified, real-time governance across desks and data sources | Observations from risk and compliance staff, dashboards demonstrating cross-desk visibility |
| Audit trails and documentation | Audit trails incomplete and not standardized | Immutable audit trails and regulator-ready documentation | Regulator readiness assessments and mock audits, documented reviews |
| Transcript centralization | Transcripts scattered across tools with poor tagging | Centralized transcripts with tagging and search capabilities | Central repository usage, search effectiveness demonstrated by reviewers |
| Escalation efficiency | Manual escalation gates with slower response | Policy-aware real-time escalation with human in the loop | Escalation logs and reviewer notes, time-to-escalate observations |
| Live monitoring coverage | No or limited live monitoring for high-risk calls | Live chaperoning and real-time intervention during high-risk calls | Event records of live monitoring and subsequent actions |
| Data integration and lineage | Data gaps and orphaned data across systems | End-to-end data flow with traceability | Data flow validation and end-to-end traceability demonstrations |
| Regulatory readiness | Regulatory inquiries harder to address, audits more manual | Improved regulator readiness and easier audit alignment | Mock audits and regulator-facing documentation reviews |
| Compliance overhead | High manual overhead on repetitive tasks | Automation of repetitive tasks, reducing manual effort | Staff workload observations and coverage of automated tasks |

Lessons and a Replicable Playbook for Real Time Compliance Governance

This section distills actionable insights from a mid-sized hedge fund initiative that bridged fragmented risk signals and legacy tooling through governance-driven AI. The core idea was to establish a policy-oriented backbone before chasing full automation, ensuring that real-time monitoring could operate within clearly defined risk boundaries while generating auditable evidence for regulators and internal governance. By grounding AI outputs in source documents and centralizing transcripts with structured tagging, the program created explainability and reproducibility at scale. The outcome was a workable balance between rapid signal processing and rigorous review, with a path to scale across desks and jurisdictions that preserves a disciplined control environment.

Transferable patterns emerge around data unification, incremental scope, and robust governance. Start by mapping the data landscape and standardizing identifiers to enable real-time correlation across OMS, EMS, CRM, and data lakes. Prioritize high-value, low-risk use cases and validate them through a controlled pilot on a single desk before broader rollout. Embed guardrails and a human in the loop for high-risk actions, and build end-to-end data lineage and immutable audit trails to support regulator readiness. Invest in centralized transcript tagging and search, live monitoring for critical moments, and strong change management to sustain adoption as requirements evolve.

The resulting playbook offers a concrete path to replicate these gains in other firms. With governance at the center, organizations can accelerate learning while keeping decision speed intact. The emphasis on observability, documented evidence, and cross desk collaboration provides a durable foundation for ongoing regulatory alignment and governance maturity as the business grows and regulatory expectations shift.

If you want to replicate this, use this checklist:

- Define governance objectives, including escalation protocols and auditable evidence

- Map the data landscape across OMS, EMS, CRM, and the data lake

- Create a unified reference model with standardized identifiers

- Encode policy-aware rules and establish escalation gates

- Design guardrails with clear human-in-the-loop decision points

- Centralize transcripts, notes, and related materials with a tagging taxonomy

- Implement centralized search across tools for rapid investigations

- Establish end-to-end data lineage and traceability checks

- Build immutable audit trails and regulator-ready documentation

- Plan a controlled pilot on a single desk to validate workflows

- Develop live monitoring capabilities for high-risk moments

- Prepare integration with core platforms to preserve data continuity

How Real Time Governance Differs From Traditional Risk Monitoring

How does real-time governance differ from traditional risk monitoring?

Real-time governance differs from traditional risk monitoring by moving from periodic reviews to continuous vigilance across desks and data sources. It uses policy-aware AI to observe signals as they arrive, automatically applying risk thresholds and escalation gates while preserving human oversight for high-impact decisions. The approach creates auditable trails and source-grounded reasoning that regulators expect, while enabling traders and researchers to respond quickly to evolving conditions. The focus is on speed without sacrificing traceability, not merely on automation.

What data sources were integrated to support unified risk signals?

Integrated data spans the order management system and execution management system alongside customer relationship management platforms and a centralized data lake. Central risk engines support the interpretation of signals, while transcripts and notes are stored with standardized tagging to enable cross system correlation. This architecture reduces silos and improves signal fidelity, providing a single, auditable view that informs timely risk decisions across desks.

How are IOI and MNPI risks handled and escalated?

IOI and MNPI risks are handled through a defined taxonomy and policy-driven routing. The system flags topics of interest and potential insider information, attaches policy tags, and triggers escalation to compliance or risk owners. Real-time alerts include contextual references from source documents, with escalation paths that preserve accountability. Decisions are supported by auditable notes and the ability to reproduce the rationale behind each escalation or action taken.

What is the role of the human in the loop in high-risk events?

Human-in-the-loop review is embedded at key decision points to preserve judgment and accountability. The system automatically flags high-risk events and routes them to designated reviewers for approval before action. This ensures speed for routine signals while maintaining robust governance for critical steps such as order placements or investor communications. The approach balances automation with expert oversight to meet both performance and regulatory expectations.

How are transcripts and evidence centralized and made accessible?

Transcripts and evidence are centralized in a single repository with a consistent tagging taxonomy and full-text search. The centralization enables rapid filtering across tools and ensures that review notes, decisions, and source references are accessible to authorized reviewers across desks. Grounding responses in the originating documents supports auditability and regulator inquiries by providing traceable context for each risk signal and action.

How is regulatory readiness demonstrated to auditors?

Regulatory readiness is demonstrated through regulator-ready documentation, immutable audit trails, and mock audits. The system records decision rationales and actions with timestamps, maps them to source materials, and provides evidence packages that can be reviewed by auditors. This ensures that governance processes are defensible under examination and supports ongoing compliance with multi-jurisdictional requirements. Documentation is prepared in standardized formats to streamline examinations.

What governance controls were put in place to manage changes?

Governance controls include policy-encoded rules, escalation gates, change management processes, and formal approval workflows. A living control framework ties risk limits to automated monitoring, while versioned policies and rollback mechanisms enable safe updates. The approach emphasizes traceability of changes, role-based access, and continuous testing to ensure governance remains aligned with evolving regulations and desk practices. Regular reviews ensure controls adapt without compromising stability.
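
Versioned policies with rollback can be pictured with a small store like the sketch below. The `PolicyStore` name, the approval check, and the `position_limit_pct` key are assumptions for illustration only; the source does not describe the actual mechanism.

```python
class PolicyStore:
    """Keeps every published policy version and tracks which one is active."""

    def __init__(self) -> None:
        self._versions: list = []  # (version, policy, approved_by) tuples
        self._active = None        # version number currently in force

    def publish(self, policy: dict, approved_by: str) -> int:
        if not approved_by:
            # Mirrors the formal approval workflow: no approver, no release.
            raise ValueError("a named approver is required to publish a policy")
        version = len(self._versions) + 1
        self._versions.append((version, dict(policy), approved_by))
        self._active = version
        return version

    def rollback(self, to_version: int) -> None:
        if not 1 <= to_version <= len(self._versions):
            raise ValueError(f"unknown policy version: {to_version}")
        self._active = to_version  # history is kept; only the pointer moves

    @property
    def active(self) -> dict:
        if self._active is None:
            raise LookupError("no policy has been published yet")
        return dict(self._versions[self._active - 1][1])

    def history(self) -> list:
        return [(version, approver) for version, _, approver in self._versions]
```

Rolling back moves a pointer rather than deleting anything, so the full history of who approved which version remains available for traceability.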

How is adoption across multiple desks ensured?

Adoption across multiple desks was driven by a staged rollout beginning with a controlled pilot, accompanied by targeted training and clear success criteria. A governance council oversaw the expansion, ensuring alignment with risk appetite and regulatory expectations. Cross-desk communication, standardized workflows, and a shared memory of policies enabled smoother onboarding and consistent use of the governance framework across teams. Ongoing support fosters sustained engagement and proficiency.

What challenges typically arise during scale and how are they mitigated?

Scale introduces data fragmentation, drift in model behavior, and alert fatigue from excessive signals. To mitigate this, the program enforced data unification, ongoing model validation, and continuous improvement of the tagging taxonomy. The team also established observability and audits to maintain visibility into how the system behaves at scale, ensuring reliability and maintainability while preventing governance drift as requirements evolve.

What lessons translate to other hedge fund risk and compliance programs?

Transferable lessons emphasize governance first, incremental pilots, and robust data unification. Centralizing signals with clear tagging improves speed and reproducibility, while human oversight preserves judgment. Observability and auditability build regulator confidence, and cross-desk collaboration expands coverage while reducing silos. The approach can be applied to other risk and compliance programs by adapting data workflows, governance controls, and escalation policies to fit different regulatory environments.

Closing Thoughts: Sustaining Real Time Governance in Hedge Fund Compliance

The journey from fragmented risk signals to a unified real-time governance capability demonstrates how governance anchored in clear policies and human oversight can coexist with rapid decision making. By weaving policy-aware AI with auditable evidence, the program preserves the rigor regulators expect while supporting the speed needed for live research and trading. The outcome is a framework that remains adaptable to evolving regulations and desk practices without sacrificing reliability.

Key enablers included a unified data foundation, standardized identifiers, centralized transcripts with structured tagging, and end-to-end data lineage. Centralized search and grounding in source documents made investigations reproducible and audit-ready. Live monitoring during high-risk moments provided proactive containment and a continuous line of sight across desks and data sources.

Adoption benefited from a staged approach, with pilots that validated governance controls before broader rollout. A human in the loop preserved judgment during critical decisions, while governance councils and cross-desk collaboration extended coverage and consistency. Observability and continuous improvement ensured the program evolves in step with regulatory expectations and business needs.

Next steps: begin by mapping your data landscape and governance requirements, then design a controlled pilot that tests end-to-end workflows across key desks, with clear escalation paths and a plan for audit-ready documentation.